Processing Incoming Data into Actionable Reports

Applying a PageRank principle to unknown information

Context:

Organizing Dynamic Data

Have you ever dealt with dynamic data that changes daily, monthly, or quarterly? Each day can bring a completely new dataset, and keeping track of it all can feel overwhelming—especially when you need to plan your activities and timeline based on incoming information.

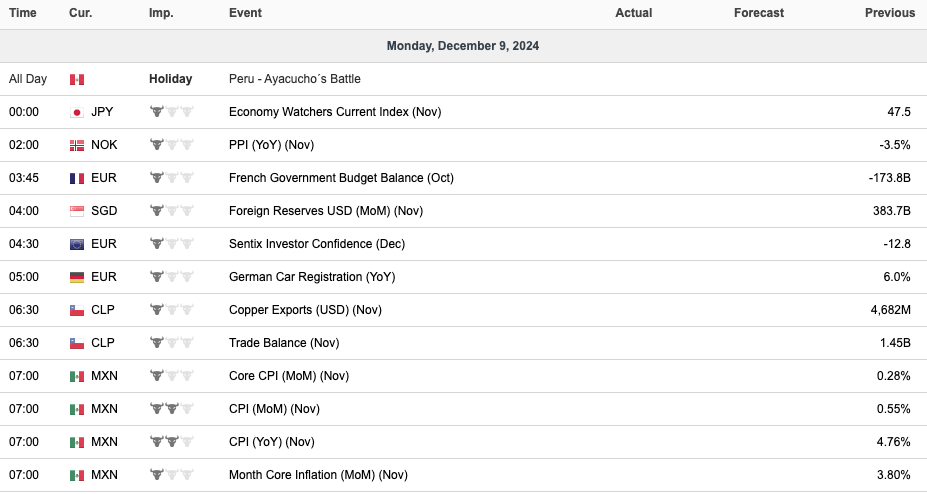

Take the international economy, for example. Before the business day begins, you might already have over 16 new global indicators to process. Some will exceed market forecasts, while others fall short. This pattern repeats daily, with different indicators being released every hour.

PageRank

How Can We Organize This Chaos?

The simplest inspiration comes from the PageRank algorithm, originally developed by Google to rank web pages. While Google has since evolved its ranking methods, the foundational concept remains powerful.

In a nutshell, PageRank assigns a score of importance to each page, based on the principle that a page becomes more important if other important pages link to it. It’s a recursive system: the importance of a page is determined by the importance of the pages linking to it.

As Eric Roberts from Stanford describes, you can imagine a random surfer clicking through links on the web. The PageRank of any given page is the probability that the surfer will land on it. Pages with more incoming links from important sources are more likely to attract the surfer, and thus rank higher in search results. You can find a link to his slides here.
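To make the recursion concrete, here is a minimal sketch of PageRank computed by repeated iteration on a made-up four-page web. The page names, the `pagerank` function, the damping factor of 0.85 (a commonly cited default), and the iteration count are all illustrative assumptions rather than anything taken from the slides.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Approximate PageRank by repeated iteration.

    `links` maps each page to the list of pages it links to.
    Every linked page must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    # Start with the surfer equally likely to be on any page.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # With probability (1 - damping) the surfer jumps to a random page.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            # A page with no outgoing links spreads its rank over all pages.
            targets = outgoing if outgoing else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical toy web: three pages link to C, nothing links to D.
toy_web = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
}

ranks = pagerank(toy_web)
for page, score in sorted(ranks.items(), key=lambda kv: kv[1], reverse=True):
    print(page, round(score, 3))
```

In this toy graph, C comes out on top because three pages link to it, and A ranks second even though only one page links to it, because that page is C. D, which nothing links to, ends up last.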

This same logic can be applied to dynamic data. While PageRank used hyperlinks as the core measure for cataloging the early internet, we can adapt its principles to organize economic indicators—leveraging domain knowledge about the data to create a meaningful ranking system.

Application

Applying PageRank Principles to Economic Data

To make sense of chaotic economic data, we need to answer some key questions about the information:

- Do we know the frequency of the data release?

- How valuable is this information each time it’s updated?

- Are there other characteristics that help predict its relevance and usage?

- Can we generate metrics that improve our summaries of incoming data?

Answering these questions with confidence allows us to rank the data effectively. This, in turn, enables more efficient querying and retrieval of relevant information, whether it’s data that’s about to be released or historical data for comparison.

Let’s use an example. Below is a lookup table that synthesizes the importance of different economic indicators for workflow planning.

| Country | Data | Coming Up Next Week | Importance for Workflow |
|---|---|---|---|
| USA | Employment | Yes (1) | Very High (5) |
| USA | Factory Orders | Yes (1) | Medium (3) |
| USA | Consumer Confidence | No (0) | High (4) |
| EU | Consumer Confidence | Yes (1) | High (4) |
| GER | Factory Orders | Yes (1) | Low (1) |
| CHN | Aggregate Social Finance | No (0) | Very High (5) |

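In this table:

- “Coming Up Next Week” is a binary indicator of whether the data will be released soon.

- “Importance for Workflow” reflects how critical the information is for decision-making.

By combining these metrics, we calculate a final score (e.g., by multiplying the two values) to prioritize incoming data. With multiplication, US Employment scores 1 × 5 = 5, while US Consumer Confidence scores 0 × 4 = 0 because it is not due next week, however important it is.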
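As a quick check of the arithmetic, the sketch below scores the six rows of the table in plain Python. Multiplication is only the example rule mentioned above, and the tuple layout and variable names are assumptions made for illustration.

```python
# (country, data, coming_up_next_week, importance) copied from the lookup table.
indicators = [
    ("USA", "Employment", 1, 5),
    ("USA", "Factory Orders", 1, 3),
    ("USA", "Consumer Confidence", 0, 4),
    ("EU", "Consumer Confidence", 1, 4),
    ("GER", "Factory Orders", 1, 1),
    ("CHN", "Aggregate Social Finance", 0, 5),
]

# Final score = release flag x importance, so anything not due next week drops to 0.
scored = [
    (country, data, upcoming * importance)
    for country, data, upcoming, importance in indicators
]

# Highest score first; Python's sort is stable, so ties keep their table order.
scored.sort(key=lambda row: row[2], reverse=True)

for country, data, score in scored:
    print(f"{score}  {country} {data}")
```

US Employment lands at the top with a score of 5, followed by EU Consumer Confidence with 4, while the two indicators that are not released next week fall to the bottom with 0.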

Python Example

From Manual Tracking to Automation

Here’s how Python can be used to streamline this process. First, create a DataFrame with your lookup table and another DataFrame holding the forecast, expected, and previous values of the data. Then, merge these tables, calculate the scores, and sort the data to highlight the most critical updates.
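The steps above might look something like the following pandas sketch. The column names (`next_week`, `importance`, `forecast`, `previous`, `score`) are assumptions, and the forecast and previous values are placeholder numbers rather than real figures; in practice they would come from whatever calendar or data feed you use.

```python
import pandas as pd

# Lookup table: domain knowledge about each indicator (from the table above).
lookup = pd.DataFrame({
    "country": ["USA", "USA", "USA", "EU", "GER", "CHN"],
    "data": ["Employment", "Factory Orders", "Consumer Confidence",
             "Consumer Confidence", "Factory Orders", "Aggregate Social Finance"],
    "next_week": [1, 1, 0, 1, 1, 0],
    "importance": [5, 3, 4, 4, 1, 5],
})

# Release table: PLACEHOLDER values only, standing in for a real data feed.
releases = pd.DataFrame({
    "country": ["USA", "USA", "EU", "GER"],
    "data": ["Employment", "Factory Orders", "Consumer Confidence", "Factory Orders"],
    "forecast": [1.0, 2.0, 3.0, 4.0],
    "previous": [1.5, 2.5, 3.5, 4.5],
})

# Merge on the shared keys; a left join keeps every indicator in the lookup table.
report = lookup.merge(releases, on=["country", "data"], how="left")

# Score each row and put the most critical updates first.
report["score"] = report["next_week"] * report["importance"]
report = report.sort_values("score", ascending=False, kind="stable").reset_index(drop=True)

# Show only the indicators that are actually due next week.
print(report[report["score"] > 0])
```

A left join is used so that indicators without an upcoming release still appear in `report` (with missing forecast and previous values) and can be filtered out explicitly rather than silently dropped.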

This process scales well. The example involves only six data entries, but the same merge, score, and sort steps apply unchanged to thousands of entries per week; only the lookup table grows. By automating with lookup tables and scripts, you can shift your focus from manual tracking to strategic analysis.

Conclusions

A Smarter Way to Manage Dynamic Data

By borrowing ideas from PageRank and leveraging domain knowledge, you can transform chaotic streams of key indicators into a structured, actionable workflow. Automation ensures that even large volumes of data remain manageable, letting you focus on decision-making instead of data wrangling.

So next time you’re buried in dynamic data, think about how you can rank and organize it—because smarter tools will translate into smarter decisions.